AI search is growing fast, but the measurement tools haven’t kept up. Agencies are pitching “AI optimisation” with confident dashboards and ranking reports — but the research shows AI gives different answers almost every time. Here’s what we can actually track right now, where the gaps are, and what to ask before signing anything.

Back in April last year, I wrote about five ways to get your business into Google’s AI Overview space. The advice in that piece still holds up — schema markup, answering customer questions clearly, showing genuine experience. If anything it matters more now.

What I didn’t cover was the measurement side. And that’s where things have become complicated.

Since April, AI search has spread well beyond Google’s AI Overviews. People are getting recommendations from ChatGPT, Perplexity, Claude and Microsoft Copilot. A growing number of agencies are now pitching “Generative Engine Optimisation” (GEO) services off the back of this, some with confident claims about AI rankings and impressive-looking dashboards. A few of our own clients have been approached.

I’ve spent the last few months digging into what’s actually measurable in this space. Some of what I’ve found is encouraging. Quite a lot of it should make business owners pause before signing anything.

How we got comfortable with Google (and why AI search is different)

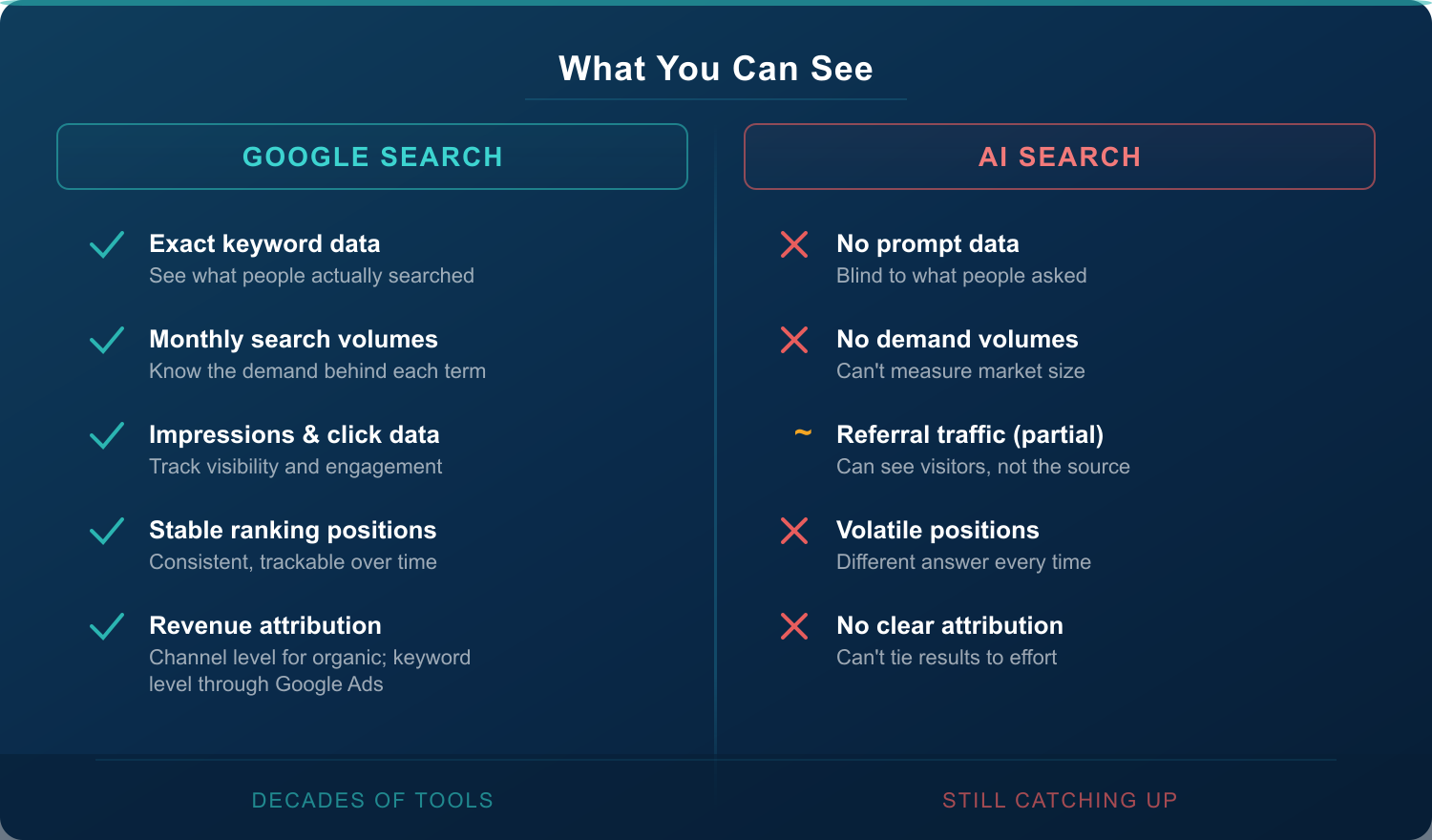

In Google land, we’ve had decades to build up the measurement tools. Google Search Console tells us which search terms are driving impressions and clicks. Google Analytics tracks what people do after they arrive. We know the search volumes behind keywords, and we can tie revenue back to specific search terms with reasonable confidence.

AI search has almost none of this.

There’s no “ChatGPT Search Console.” Now, Google Analytics can tell you someone arrived at your site from ChatGPT — it shows up as referral traffic from chatgpt.com, and you can follow those visitors through to a conversion. That part works and it’s worth keeping an eye on.

The gap is that you have no idea what they asked to get there. In Google, you can see that 1,200 people searched “scaffolding hire Auckland” last month and 40 of them clicked through to your site. With ChatGPT traffic, you can see the visitors arriving but you’re completely blind to the prompts behind them. You know someone walked through your door. You’ve got no idea which street they came from.

That missing piece — the demand side — is what makes this so much harder to measure than traditional SEO.

Google Search vs AI Search comparison — what measurement data is available for each

What we can actually measure right now

Brand monitoring through synthetic prompts. This is the main method most GEO tools use. They come up with prompts like “best scaffolding company in Auckland” or “top kitchen renovation firms in New Zealand,” run them through AI platforms, and check whether your brand shows up.

It works to a point. But notice that word: synthetic. These aren’t prompts from real people. They’re educated guesses. In Google we can see what people actually searched for. Here we’re making assumptions about what they might type. That’s a meaningful difference in how much confidence you can put in the data.

Referral traffic in GA4. As I mentioned, this does work. It’s patchy and it probably underreports, but it’s real data from real visitors. Set up a segment or custom report and track it over time.

Manual spot-checks. Open a fresh ChatGPT conversation, type the kind of question your customers might ask, see what comes back. Do it across a few platforms. It’s not scalable but it’s honest, and you’ll learn more from ten minutes of this than you will from most dashboards.
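If you export referral data from GA4 (for example, the session source column from a traffic acquisition report), a few lines of Python can tally AI platform visits month by month. This is a minimal sketch, assuming the hostnames listed below are how each platform shows up as a referrer. The list is illustrative rather than complete, so check your own GA4 reports for the exact values. The function names are hypothetical.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Illustrative, not exhaustive: referrer hostnames assumed to belong to AI platforms.
# Confirm against the sources that actually appear in your own GA4 reports.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Microsoft Copilot",
}


def hostname_of(source: str) -> str:
    """Normalise a full URL or a bare source such as 'chatgpt.com' to a hostname."""
    if "://" not in source:
        source = "//" + source  # lets urlparse treat a bare domain as the host
    host = (urlparse(source).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def classify_referrer(source: str) -> Optional[str]:
    """Return the AI platform for a referrer, or None if it isn't in the list."""
    return AI_REFERRERS.get(hostname_of(source))


def count_ai_referrals(sources: list[str]) -> Counter:
    """Tally visits per AI platform, ignoring every other referrer."""
    counts: Counter = Counter()
    for source in sources:
        platform = classify_referrer(source)
        if platform is not None:
            counts[platform] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical export: one entry per session.
    sources = ["chatgpt.com", "https://www.perplexity.ai/search", "google", "claude.ai", "chatgpt.com"]
    print(count_ai_referrals(sources))
```

Run it on each monthly export and you have a simple trend line. It still can’t tell you what anyone asked before they clicked through.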

Where the problems are

AI gives different answers every time

Ask ChatGPT the same question twice and you’ll likely get different brands recommended in a different order. This isn’t a glitch — it’s how language models work. They’re probability engines, not databases.

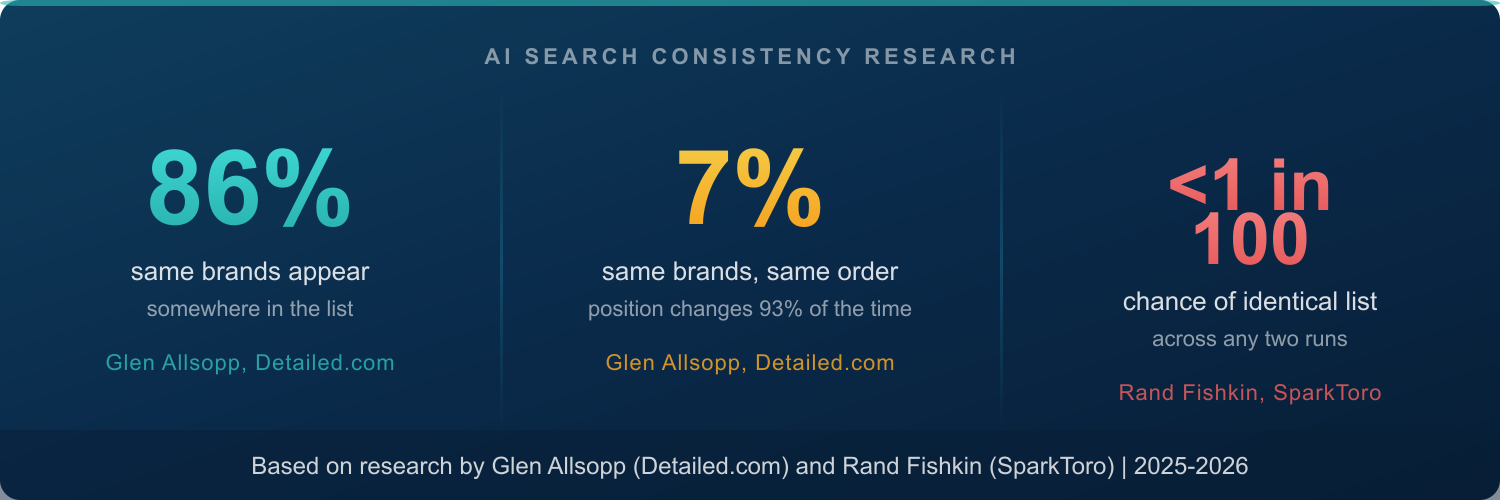

Glen Allsopp at Detailed.com has been tracking this with a live tool that asks ChatGPT identical questions more than a hundred times each. While 86% of the same brands tend to show up somewhere across those responses, the same brands appeared in the same order only 7% of the time. So when someone tells you they’ve got your business “ranking #1 in ChatGPT,” keep in mind that the order changes in roughly 93% of responses. That claim doesn’t mean much.

Rand Fishkin at SparkToro went further. His team had 600 volunteers run nearly 3,000 prompts across ChatGPT, Claude, and Google’s AI features. They found less than a 1-in-100 chance of getting the same brand list twice, and roughly a 1-in-1,000 chance of getting the same list in the same order. As Fishkin put it, any tool claiming to give you a “ranking position in AI” should be treated with serious caution.

AI Search Consistency Research — 86% same brands appear but only 7% in the same order, less than 1 in 100 chance of identical list
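To see why “same brands” and “same brands in the same order” produce such different numbers, here is a small Python sketch that compares repeated runs of one prompt. It is illustrative only and is not how Detailed.com or SparkToro calculate their figures. It checks every pair of runs, counting a pair as a set match when both lists contain the same brands in any order, and as an order match only when the lists are identical. The brand names are placeholders.

```python
from itertools import combinations


def consistency(runs: list[list[str]]) -> dict[str, float]:
    """Compare every pair of runs for the same prompt.

    same_set:   share of pairs listing exactly the same brands, in any order
    same_order: share of pairs listing the same brands in the same order
    """
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs to compare")
    same_set = sum(set(a) == set(b) for a, b in pairs)
    same_order = sum(a == b for a, b in pairs)
    return {"same_set": same_set / len(pairs), "same_order": same_order / len(pairs)}


if __name__ == "__main__":
    # Hypothetical results from four runs of one prompt.
    runs = [
        ["Brand A", "Brand B", "Brand C"],
        ["Brand B", "Brand A", "Brand C"],
        ["Brand A", "Brand B", "Brand C"],
        ["Brand A", "Brand D"],
    ]
    r = consistency(runs)
    print(f"same brands: {r['same_set']:.0%}, same order: {r['same_order']:.0%}")
    # prints "same brands: 50%, same order: 17%"
```

Even in this tiny example, the set matches far more often than the exact order, which is the same pattern the research reports at a much larger scale.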

Nobody knows what prompts people are actually using

In Google, keyword research gives us real search terms with known monthly volumes. We know what people type because Google tells us.

In AI search, we’re guessing. And SparkToro’s research found that real human prompts are messy — even when people wanted the same thing, almost no two prompts looked alike. So the tidy synthetic prompts that GEO tools use (“best scaffolding company in Auckland”) might not reflect how your actual customers talk to ChatGPT at all.

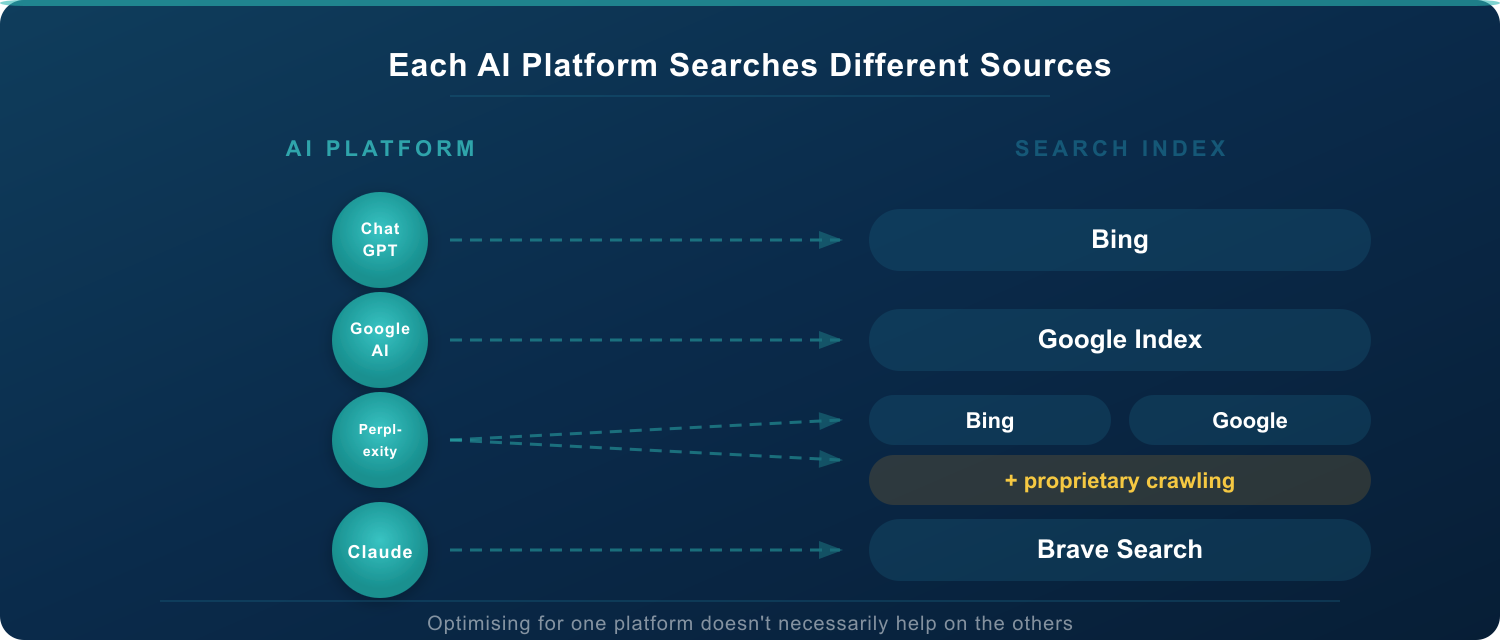

Each AI platform searches different sources

ChatGPT searches Bing. Google AI Overviews search Google’s own index. Perplexity pulls from multiple sources. Claude uses Brave Search. Optimising for one platform doesn’t necessarily help you on the others.

Each AI platform searches different sources — ChatGPT uses Bing, Google AI uses Google, Perplexity uses multiple sources, Claude uses Brave Search

The tracking tools don’t see what your customers see

This one’s worth knowing about. Many GEO tools use APIs to query AI platforms at scale — automated pipelines rather than actual browser sessions. Research from Otterly.AI has shown that API results differ significantly from what a real person sees when they type the same question into ChatGPT. Different configurations, different models, different web search behaviour.

Allsopp flagged the same thing. He deliberately doesn’t use the OpenAI API for his tracking because the results are too different from what real users get. Worth asking any GEO provider: is the data in your dashboard based on what my customers actually see, or what an API returned?

The encouraging bits

I don’t want to paint this as all doom and gloom, because there are some useful signals in the research.

Visibility percentage works. While exact positions jump around, how often a brand appears across many runs of the same type of prompt is statistically meaningful.

To make that concrete: say you’re a scaffolding company in Auckland. If we run “best scaffolding hire companies in Auckland” through ChatGPT fifty times and your brand appears in 35 of those responses, that’s a 70% visibility rate. Your competitor shows up 15 times out of 50 — that’s 30%. The position you appear in shifts each time and isn’t worth tracking. But that 70% vs 30% gap tells us something real.

And for New Zealand businesses in specific niches, there’s a smaller pool of options for AI to choose from. That means genuine authority shows through more consistently than it does in broad international categories.
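The visibility calculation in the scaffolding example is simple enough to sketch. The Python below assumes you have already collected the text of each response (for instance from manual runs) and counts the share of responses that mention each brand, ignoring position entirely. The brand names and aliases are hypothetical. It also adds a Wilson score interval, one standard way to put error bars on a proportion, as a rough check on whether a gap like 70% vs 30% is bigger than run-to-run noise. GEO tools may use different methods.

```python
import math
import re


def mentions(response: str, aliases: list[str]) -> bool:
    """True if any alias appears as a whole phrase in the response, ignoring case.

    Word boundaries assume each alias starts and ends with a letter or digit.
    """
    return any(
        re.search(r"\b" + re.escape(alias) + r"\b", response, re.IGNORECASE)
        for alias in aliases
    )


def visibility_rates(responses: list[str], brands: dict[str, list[str]]) -> dict[str, float]:
    """Share of responses (0 to 1) in which each brand is mentioned at least once."""
    if not responses:
        raise ValueError("no responses to score")
    return {
        brand: sum(mentions(r, aliases) for r in responses) / len(responses)
        for brand, aliases in brands.items()
    }


def wilson_interval(rate: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion observed over n runs."""
    denom = 1 + z**2 / n
    centre = (rate + z**2 / (2 * n)) / denom
    half = z * math.sqrt(rate * (1 - rate) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


if __name__ == "__main__":
    # Hypothetical data mirroring the example: 50 runs of one prompt.
    responses = (
        ["Kiwi Scaffold is a popular choice."] * 20
        + ["Consider Kiwi Scaffold or Harbour Access."] * 15
        + ["Here are some general tips for hiring scaffolding."] * 15
    )
    brands = {"Kiwi Scaffold": ["Kiwi Scaffold"], "Harbour Access": ["Harbour Access"]}
    for brand, rate in visibility_rates(responses, brands).items():
        low, high = wilson_interval(rate, len(responses))
        print(f"{brand}: {rate:.0%} (roughly {low:.0%} to {high:.0%})")
```

With 50 runs, the two intervals don’t overlap, which is the sense in which a 70% vs 30% gap tells you something real even though individual positions are noise.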

The good SEO habits carry over. Schema markup, genuine expertise, consistent information across the web, content that answers real questions — this is what AI platforms look for when deciding who to recommend. The advice from my April post about E-E-A-T and locally relevant content hasn’t gone stale. It applies across Google, ChatGPT, and everywhere else.

Early movers seem to be pulling ahead. Research from Authoritas found that the top brands in a category are capturing a growing share of AI recommendations over time. The gap between visible and invisible appears to be widening, which suggests there’s value in starting now rather than waiting for the tools to mature.

What I’d suggest

We’re early. This is not Google Ads in 2026, where we can forecast returns with confidence. It’s more like Google Ads in 2005: clearly going somewhere, but the tracking hasn’t caught up.

Keep doing what works for traditional search. Everything that builds authority in Google also feeds AI visibility. None of that effort is wasted regardless of where AI search goes.

Start watching your AI referral traffic. Set up the GA4 tracking. Run manual checks across ChatGPT, Google AI and Perplexity for your key services. Build a picture, but don’t expect the clean measurement you’re used to from Google.

Ask hard questions before spending on GEO services. If someone pitches you AI optimisation with ranking reports and confident projections, ask them how they deal with the fact that results change every time. Ask what their prompts are based on. Ask whether their tracking uses the actual ChatGPT interface or an API. Good providers will have solid answers. Others will change the subject.

Treat this as exploration, not a guaranteed-return campaign. We’re working with a small group of early clients who want to understand this channel. We’re upfront that it’s an investment in learning — in building presence and knowledge in a space that’s still taking shape. The businesses that do this work now will be better placed when the measurement tools eventually catch up.

Where we land

AI search is growing. The measurement around it hasn’t kept pace. There’s a gap between what some providers are promising and what anyone can actually prove right now.

Our advice: keep building genuine authority (it works everywhere), stay curious about the data that is available, and be careful about big commitments to tools or services that can’t yet show you a clear line from their work to your results.

If you’d like a second opinion on how AI search fits into your SEO strategy, or if you’d like to be part of our early exploration group, fill in the form below.

References

- Five Ways to Get Your Business Into Google’s AI Overview Space — Ark Advance, April 2025

- AI Search Response Consistency — live tracker — Glen Allsopp, Detailed.com (acquired by Ahrefs), updated hourly

- NEW Research: AIs are highly inconsistent when recommending brands or products — Rand Fishkin & Patrick O’Donnell, SparkToro, January 2026

- AI recommendation lists repeat less than 1% of the time — Search Engine Land, January 2026

- UI vs API: Why are the results from the API different from the UI in ChatGPT and Perplexity? — Otterly.AI, 2025

- Rand Fishkin proved AI recommendations are inconsistent — here’s why and how to fix it — Search Engine Land, February 2026